How Dashly failed 27 hypotheses in a month and doubled its qualified leads

This is a story of how we built the growth team, embraced the art of growth hacking, and increased the number of qualified leads. If you want to hack growth and improve your company’s metrics, too, this article is a must-read.

Meet Polly, a growth marketing team lead at Dashly. We sat down with her to discuss her team’s workflow.

Polly told us why Dashly needed the growth team, how it was built, and how they tested growth hypotheses.

Hi, Polly 🙂 First, let’s figure out why Dashly needed a growth team and what a growth team is.

Since the Dashly team adores new approaches and frameworks like OKR, Jobs to be Done, and design sprints, we couldn’t ignore the growth methodology.

Jokes aside, we saw it as a tool for achieving the company’s goals. Dashly has been on the market since 2014, and we have over 5,000 customers globally.

But we want to grow faster.

At the beginning of 2021, we aimed to increase our yearly revenue. Maintaining our existing processes was no longer enough. We needed to improve the funnel by acquiring more users, increasing the conversion rate to signup and payments, reducing churn, etc.

There are so many things to improve, but so little time. So we decided to improve our key product metrics (KPIs) systematically.

We built a dedicated team and defined metrics to keep the work focused, systematic, and intensive.

The growth team helps the company hack growth. It identifies solutions that quickly improve the business’s key metrics: revenue, lead count, closed deals count, etc. The team comes up with hypotheses about how these metrics can grow and validates them quickly, so the successful ones can be scaled and the unsuccessful ones thrown out.


What are the key metrics your team is working on?

The growth team focuses on the Dashly sales funnel. Our key metrics are:

- demo request count;

- the number of completed demo sessions;

- the number of closed deals.

A platform implementation involves a team of an account manager, a copywriter, a designer, a layout designer, and an analyst. The account manager works out how the customer’s goals can be achieved, then develops, tests, and launches various triggered campaigns.

What we offer is effective help in achieving our customers’ goals, not just a subscription to Dashly. Our experience with online stores, digital products, and schools helps us launch campaigns quickly to increase the conversion rate on our customers’ websites.


When did you start the team? What are your current outcomes?

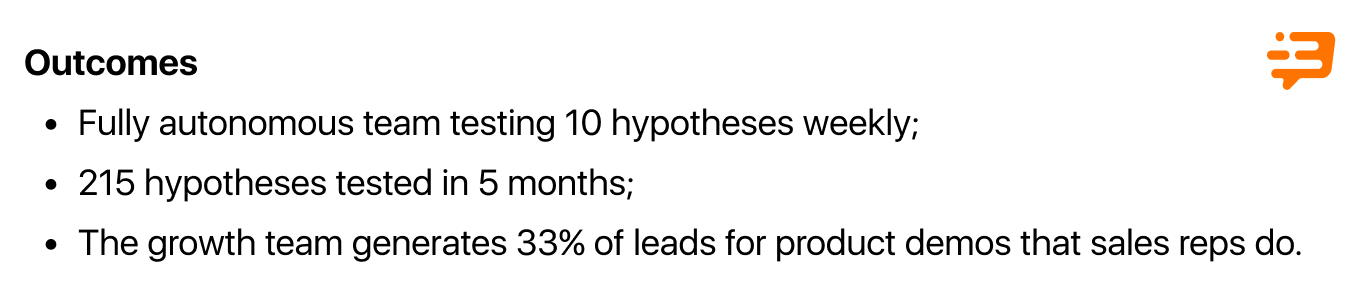

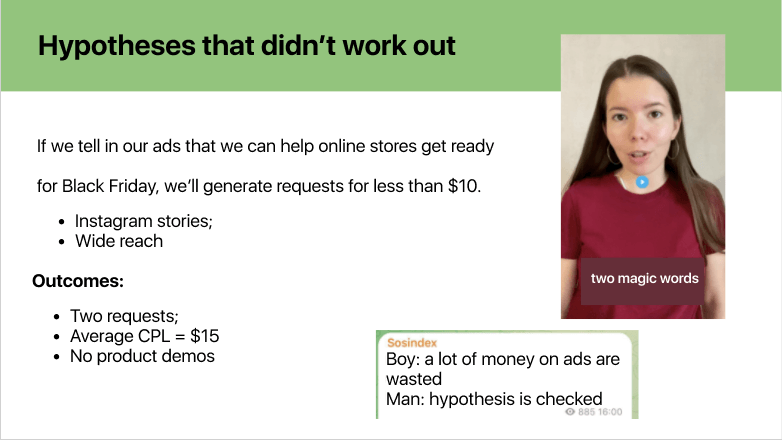

We’ve been working together as a team since May 2021. In our first month, we tested 36 hypotheses: 9 worked out, and 27 didn’t.

After two months, the growth team was generating 33% of product demos: a third of the demos our sales reps ran were for leads the growth team had generated.

We now test at least ten hypotheses a week. The result is a list of qualified leads to whom Dashly managers can sell our growth marketing services.

The data shows that leads we generate convert to product demos at a higher rate than users we don’t reach out to.

Thanks to scalable hypotheses, we doubled the number of qualified leads.

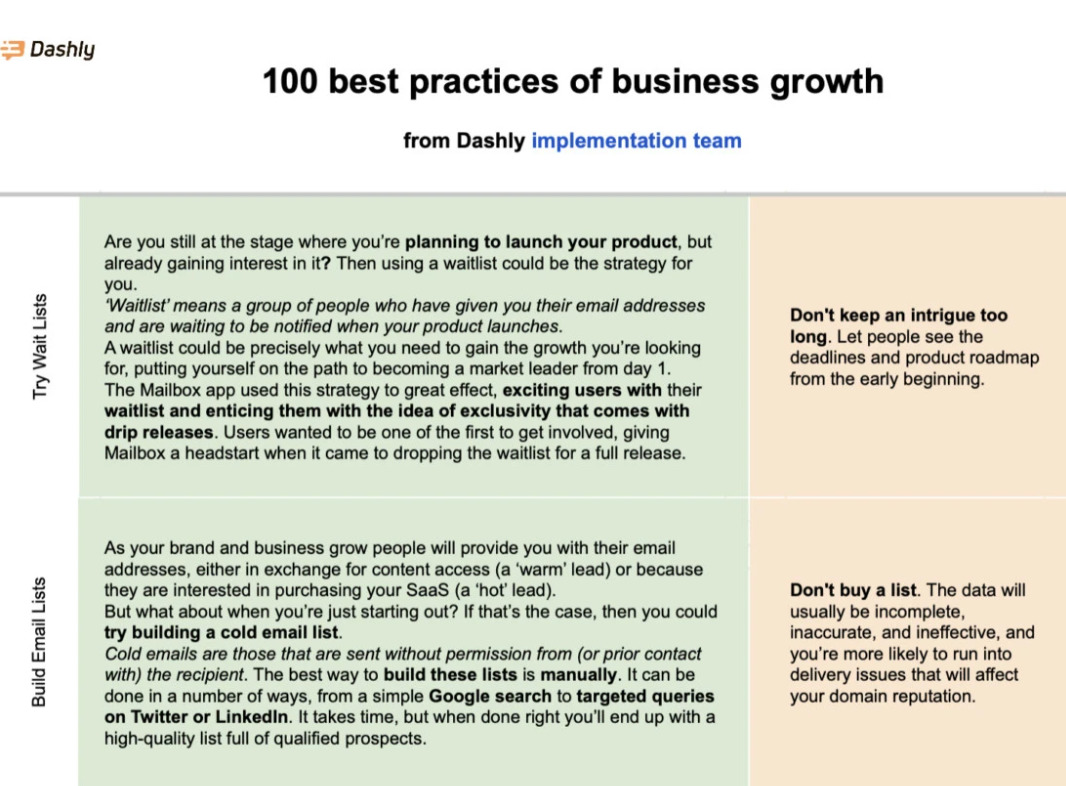


It’s a great outcome! Ok, now let’s discuss how you achieved that. How did you approach team building?

At first, we had no idea what to do or how 😀 We couldn’t even agree on what counted as a hypothesis for us.

Say we’re running Facebook ads: is each offer a separate hypothesis, or is the whole ad group one hypothesis? We couldn’t agree on that.

It dawned on us that if we handled everything on our own, it would take us a long time to get off the ground and achieve the goals we set. So that wasn’t an option for us.

We decided to reach out to someone who would explain it all to us and help us arrange the processes.

So, we asked Growth Academy, a hypothesis-testing school. Antony, a Growth Academy expert, became our consultant.

Antony told us we had to fill specific roles, so we invited colleagues with relevant experience to join our team.

Each Monday for four weeks, we met with Antony, and we also chatted on Telegram. Antony taught us to state, discuss, and test hypotheses correctly. He also introduced all the necessary rituals to our team, such as meetings and hypothesis pitching, and taught us to run them properly.

Antony has consulted many companies, so he has a lot of practical experience and expertise. That experience helped us speed up and build processes in our team.

Who is now on your team?

Our growth team has seven people: four FTEs (full-time employees) and three more people we borrow from our marketing team.

The FTEs in our team are:

- Growth team lead: that’s me. I come up with hypotheses and look for insights to generate them from. I’m also responsible for working with other teams and setting the team’s direction.

- Scrum master: a person responsible for processes. He pings us every day so testing doesn’t slow down, helps us deal with whatever blocks our processes, holds brainstorming sessions, and runs all our other rituals.

- Two PPC (pay-per-click) specialists running ad campaigns in various channels: Facebook, Instagram, TikTok, and Google Ads.

These are the teammates we borrow from the marketing team:

- Marketer is a person with a “fresh view” who also leads the production team (part of our larger marketing team). The marketer attends only our growth meetings, where we share our hypotheses and test outcomes, and can suggest how to improve a hypothesis or explain why it didn’t work out. That outside view matters, because our own views often get blurred and we become less objective. The marketer also knows how to work with various marketing channels and suggests where to test a hypothesis. As production team lead, they need to know which hypotheses we test, because their team will be responsible for scaling the ones that work out.

- Designer creates visuals for ads and helps us create landing pages for tests.

- Analyst helps us track the key metrics, collects baseline statistics before we run hypotheses, and calculates sample sizes and test durations.

How is your work arranged now? Where does the hypotheses testing start?

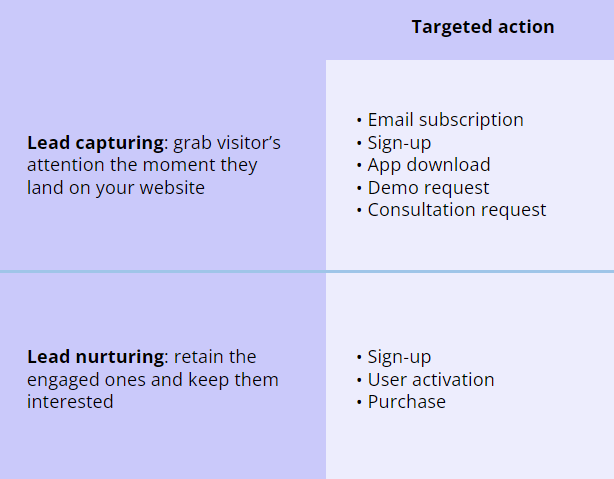

It starts with understanding your focus: where you are in the funnel and which stages you should work on to reach the target metric. If you want tangible outcomes and to truly “hack” growth, testing hypotheses at all stages at once would be a mistake.

Once we reach the target metric at our current funnel stage, we move on to the next one.

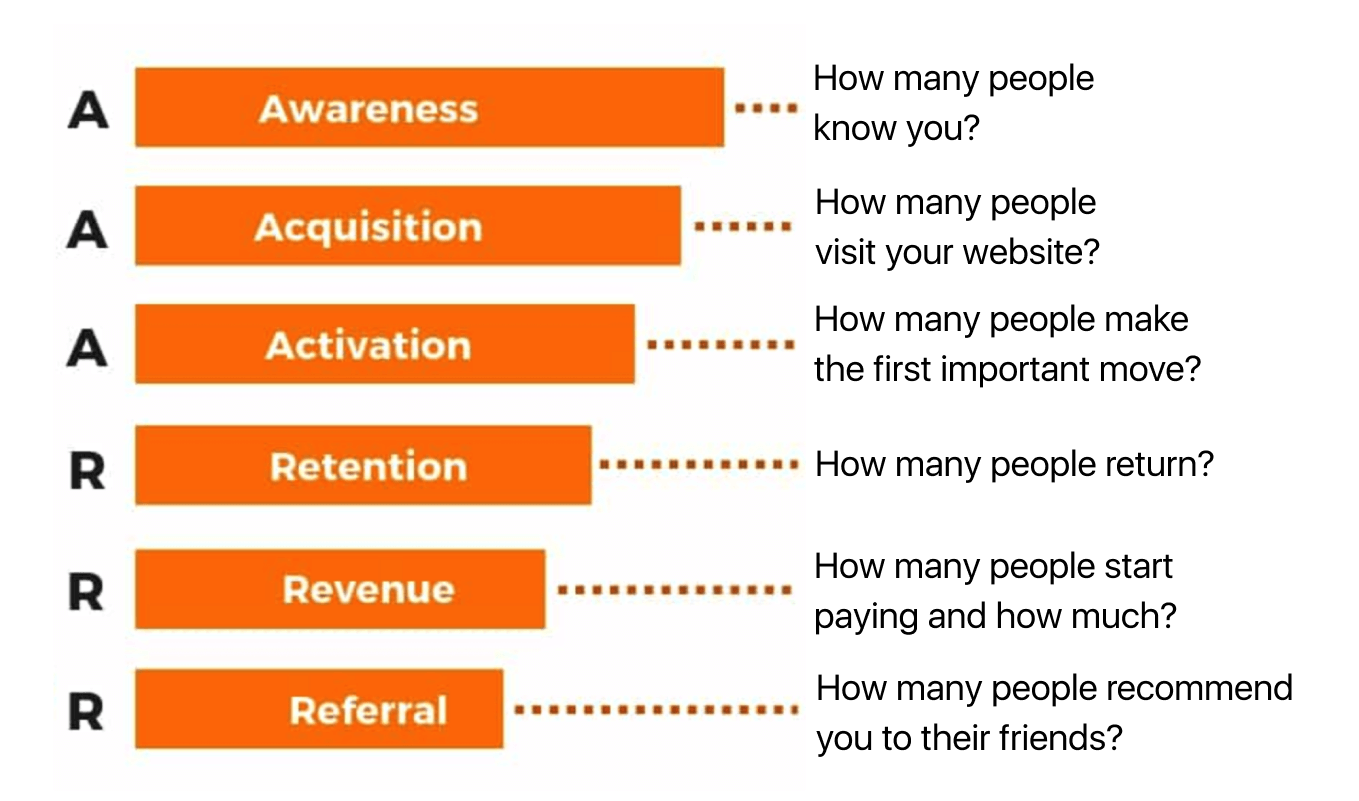

Thinking about the funnel stages, we use the AAARRR framework. It describes the six key product metrics: awareness, acquisition, activation, retention, revenue, and referral.

Based on the yearly strategic company goals we set using the OKR framework, we decide at the beginning of each quarter which funnel stage we’ll work on. User activation is crucial for us: we want to increase the number of users who take their first step in our product.

Okay, I got it. What happens next? How do you make up hypotheses and test them?

Oooh, don’t even get me started here 🙂

We are working in weekly sprints that include six stages:

- hypotheses generation,

- hypotheses discussion,

- syncing up with related teams within a sprint,

- hypothesis production,

- testing,

- analytics.

Hypotheses generation. A growth hypothesis is a bold assumption that users or customers will react in a certain way if we do something, and that this reaction will increase a specific metric by a certain amount.

We state hypotheses using the “If…, then…” framework. After “If” we say what we want to do; after “then” we say what outcome we expect.

Let’s consider some examples:

If we run ads in Instagram stories for a broad audience with video success stories of digital schools increasing sales with our website chatbot, then we’ll generate 40 chatbot configuration requests.

If we change an offer on the landing page from “Product demo” to “Free lead generation audit”, then the conversion rate will increase by 10%.

During idea generation, each team member considers growth marketing tools and solutions they would like to try to move our key metric and related ones.

Keep in mind that you can’t test on segments that are too small. You either won’t get statistically significant data, or you’ll have to run your tests much longer to collect it.

Hypotheses built for tiny segments also can’t be scaled. For example, say you came up with an excellent product demo offer for a very narrow business segment, one with only ten companies in the country. You can acquire all of them, of course, but that’s it: the hypothesis is unscalable, and there’s no room for growth.

Here’s what helps us generate hypotheses ideas:

- analytics and research;

- competitor monitoring;

- other teams;

- product demo recordings with our sales reps.


We need to answer some questions to turn an idea into a worthy hypothesis:

- How much time do we need to execute the hypothesis?

- How much money is required?

- How much time will the test take?

- What metrics will we assess at the end?

- What do we want to find out with this hypothesis?

- How will it influence a project or business?

- Where should we land our paid ads traffic?

- What creative inputs do we need?

These questions help you plan your hypothesis workflow and prioritize. Prioritization lets you select hypotheses for a sprint: the ones that require the least money, promise the highest returns, and are the best thought out come first.
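One simple way to turn the answers above into a ranking is a score that rewards expected impact and confidence and penalizes cost. The sketch below is an ICE-style heuristic we chose for illustration, not Dashly’s documented formula; the `Candidate` fields, the scoring rule, and the example backlog (including the third hypothesis) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    expected_impact: float  # e.g. expected extra leads (hypothetical units)
    confidence: float       # 0..1: how well the idea is thought out and backed by data
    cost: float             # money plus effort, in one (hypothetical) unit

    def score(self) -> float:
        # Higher impact and confidence raise the score; higher cost lowers it.
        return self.expected_impact * self.confidence / max(self.cost, 1e-9)


def plan_sprint(candidates: list[Candidate], capacity: int) -> list[Candidate]:
    """Return the top `capacity` hypotheses by score, best first."""
    return sorted(candidates, key=lambda c: c.score(), reverse=True)[:capacity]


backlog = [
    Candidate("Instagram stories with video success stories", 40, 0.5, 300),
    Candidate("Landing page offer: free lead generation audit", 25, 0.7, 50),
    Candidate("Retarget demo no-shows by email", 10, 0.8, 20),
]
for c in plan_sprint(backlog, capacity=2):
    print(c.name, round(c.score(), 3))
```

A cheap, well-understood idea can outrank a bigger but expensive one; the exact weights are a team decision.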


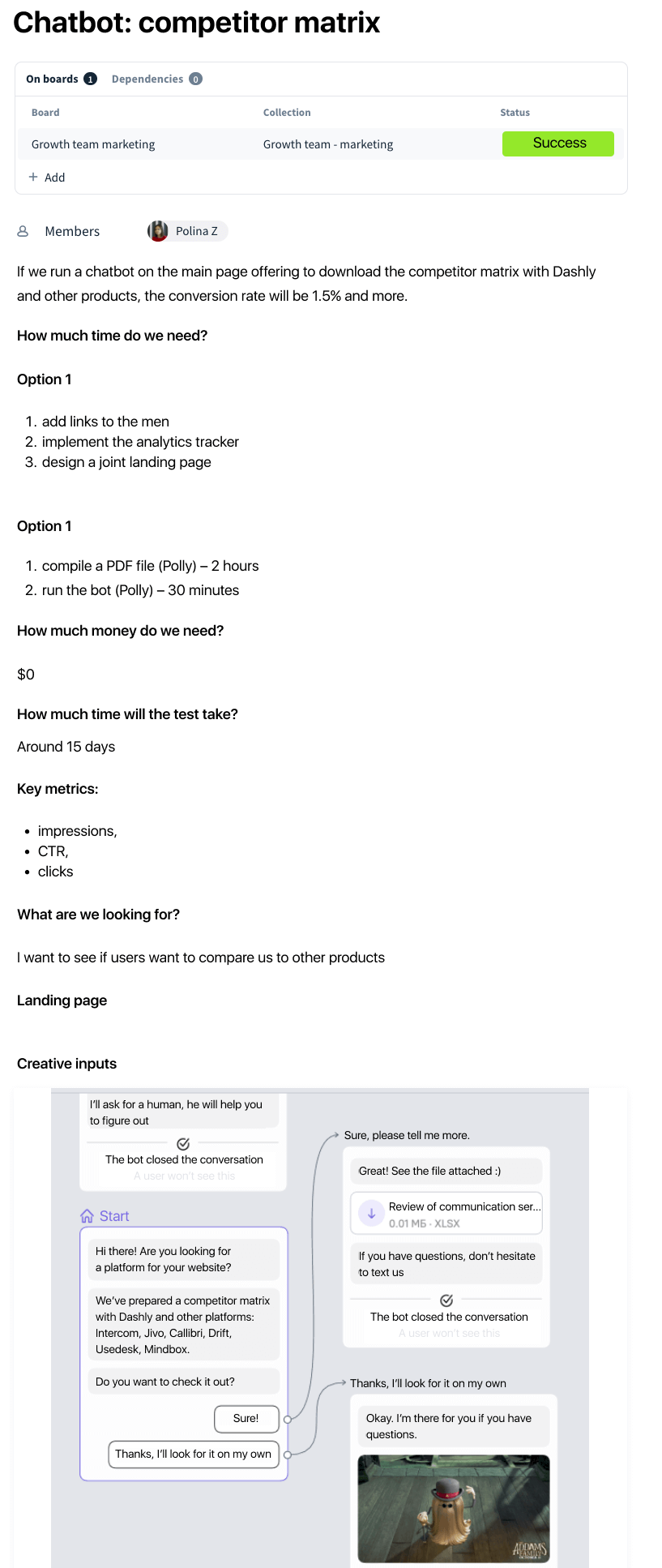

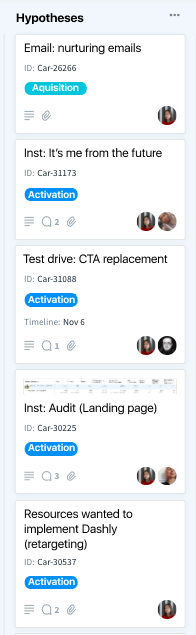

We record all our hypotheses in Favro, a project management platform. Each hypothesis gets a card where we specify:

- channels where we plan to test a hypothesis;

- the main idea of a hypothesis;

- a funnel stage we’re going to improve;

- timing of the hypothesis test (start and end);

- answers to the questions I mentioned earlier;

- responsible employees.
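The card fields above map naturally onto a small record type. The sketch below is a hypothetical model for illustration only (it does not use or mirror Favro’s API); it also renders the “If…, then…” statement and flags planning questions left unanswered. The dates, owner, and funnel stage in the usage example are made up.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class HypothesisCard:
    action: str            # the "If..." part: what we do
    expected_outcome: str  # the "then..." part: what we expect
    channels: list[str]
    funnel_stage: str      # e.g. an AAARRR stage
    start: date
    end: date
    owner: str
    answers: dict[str, str] = field(default_factory=dict)  # planning question -> answer

    def statement(self) -> str:
        return f"If {self.action}, then {self.expected_outcome}."

    def duration_days(self) -> int:
        # Inclusive of both the start and the end date.
        return (self.end - self.start).days + 1

    def missing_answers(self, questions: list[str]) -> list[str]:
        return [q for q in questions if not self.answers.get(q)]


QUESTIONS = ["How much money is required?", "What metrics will we assess at the end?"]
card = HypothesisCard(
    action='we change the landing page offer from "Product demo" to "Free lead generation audit"',
    expected_outcome="the conversion rate will increase by 10%",
    channels=["landing page"], funnel_stage="acquisition",
    start=date(2021, 6, 7), end=date(2021, 6, 13), owner="PPC specialist",
    answers={"What metrics will we assess at the end?": "landing page conversion rate"},
)
print(card.statement())
print(card.missing_answers(QUESTIONS))  # the budget question is still open
```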

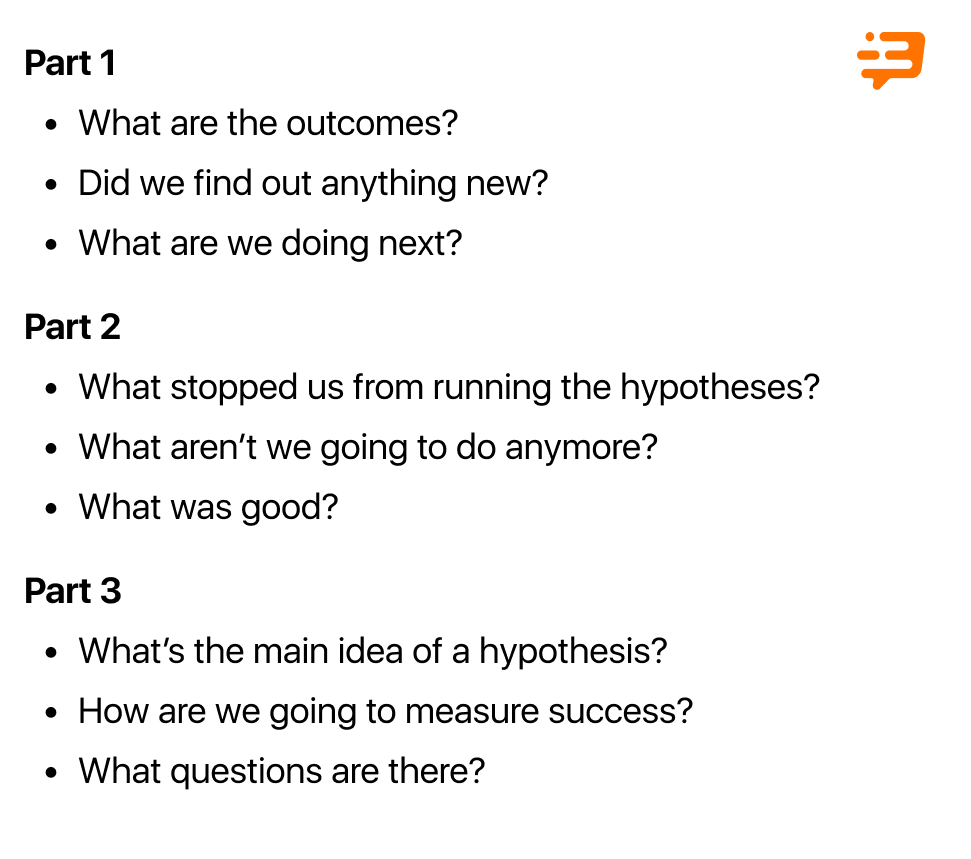

Hypotheses discussion. Every Monday, at the start of each sprint, we hold a growth meeting that lasts around two hours. Our four growth team FTEs and the marketer from the marketing team attend it. Growth meetings have three stages.

- In the first stage, we discuss the outcomes of last sprint’s hypotheses: what we want to improve and scale, and what we want to reject.

- In the second, we run a sprint retrospective. We exchange views on what went well and badly during the last sprint, what we could improve, what stopped us from running hypotheses, and how to avoid those obstacles in the next sprint.

- The third stage is hypothesis pitching. Each team member has 1.5 minutes to present a hypothesis and explain why they want to test it: its central idea and how success will be measured. Then the team discusses it for another 1.5 minutes. So each person has 3 minutes to “sell the idea”, that is, to persuade the team to test that hypothesis.

If you can’t “sell the idea”, don’t include it in a sprint.

A person can fine-tune it for the next growth meeting and present it again to convince teammates to test it during a sprint.If you need more ideas for your growth strategy that will be easier to “sell”, we’re here to help 👇

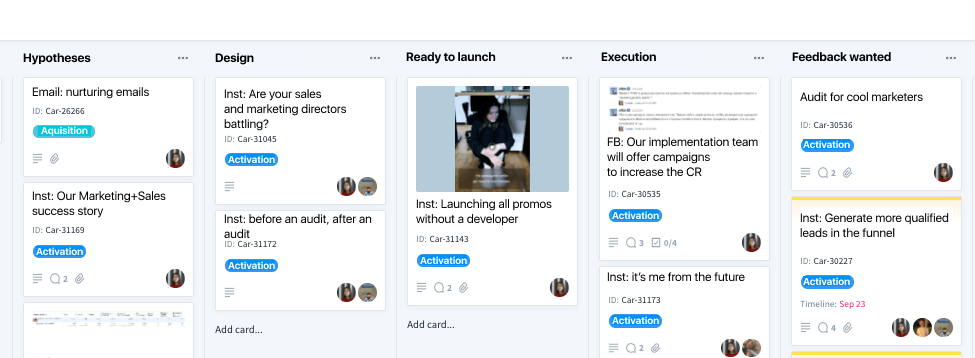

After a growth meeting, we have our sprint plan: a list of 10-15 hypotheses we plan to test during the week. We add them to a board in Favro.

Then, we agree on who’s responsible for each hypothesis. Often it’s the person who came up with it. Sometimes we delegate it to someone who knows better how to test it. For example, if I come up with a hypothesis about Facebook ads, I’ll delegate it to a PPC specialist with relevant expertise.

Sync up with related teams. Our goal is to let other teams know when hypothesis tests may affect their workflows or when we’ll need their help.

Let’s say we’re testing a product demo request hypothesis. We tell the sales team that, starting on a specific date, more leads will come in from a particular ad campaign and they will need to call them back.


Production and testing. We allow a maximum of six hours to launch a hypothesis, so we always aim for speed, whether in producing creatives, running the hypothesis, or collecting statistically significant data.

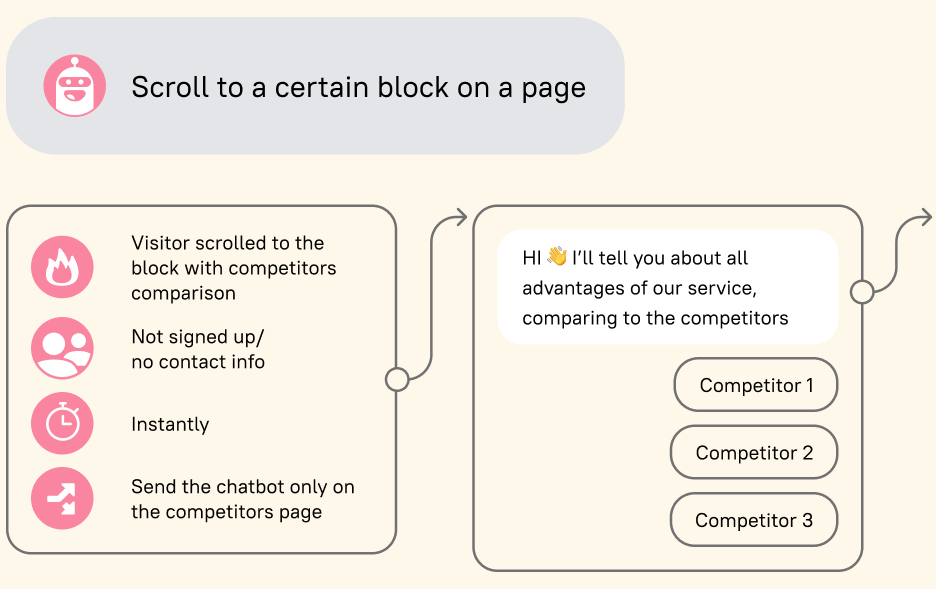

This is where the MVP (minimum viable product) approach comes in handy. We deliver a minimum viable version that lets us see whether a hypothesis works, rather than developing everything from top to bottom.

Let’s say we want to introduce chatbot campaign templates in Dashly. We believe this will help us acquire new product users, so we need to validate the idea. We don’t develop the campaigns, because engaging copywriters, designers, and developers is costly and time-consuming at this stage. Instead, we make a few visuals and offers and test them in Facebook ads using a fake account.

The CTRs of these ads tell you whether users are interested.

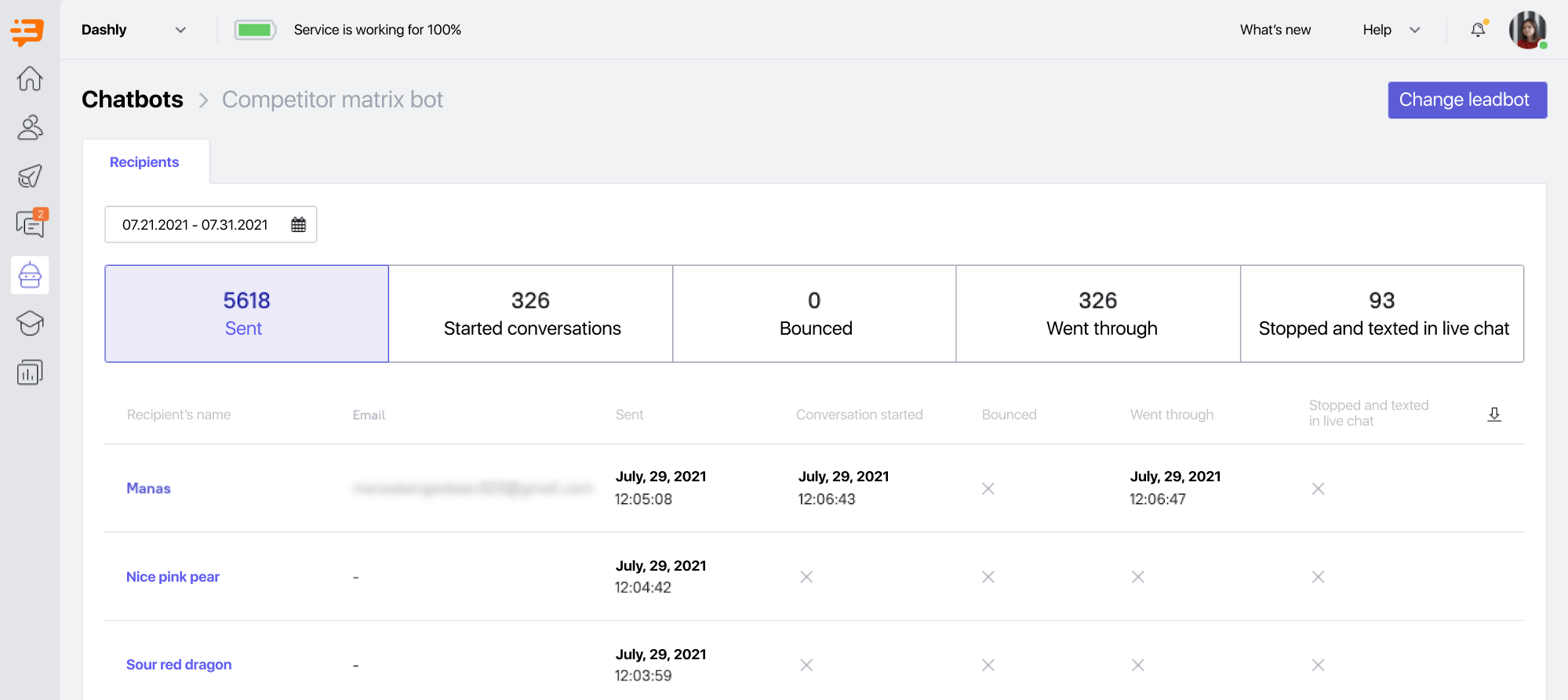

If we want to test a video, we shoot a simple selfie video. If we want to test a whole landing page, we design it in a page builder. If we want to find out whether an Intercom competitors matrix is worth doing, we create a table in Google Sheets from scratch and offer it to our audience through our chatbot. The same goes for everything else.

The MVP approach helps us avoid funding projects that aren’t worth it.

During tests, we update each hypothesis’s status on the Favro board. We also hold stand-ups each morning to check on each hypothesis, its testing progress, and possible obstacles. This helps us spot challenges quickly.

Analytics. When a test is over, it’s time to analyze the outcomes and the key metrics we listed when stating a hypothesis.
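Before calling a hypothesis a success, it helps to check that the difference between variants is unlikely to be noise. Below is a minimal sketch of a two-sided two-proportion z-test using only the standard library. The counts are made up, and the 0.05 threshold and the “scale it / rerun or reject” rule are common conventions we assume here, not a process the source specifies.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for H0: both variants convert at the same rate."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical result: old landing page offer (A) vs. new offer (B).
z, p = two_proportion_z_test(conv_a=150, n_a=3000, conv_b=195, n_b=3000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("scale it" if p < 0.05 and z > 0 else "not conclusive: rerun or reject")
```

With small samples the same relative difference usually won’t reach significance, which ties back to the warning about testing on tiny segments.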

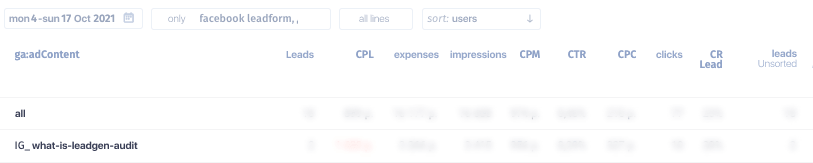

We use our dashboard in Rick — the end-to-end analytics platform — to see the key metrics.

In Rick, we can see the whole picture: from the ad campaign that hooked a user and how much it cost us, to the point where the user converted to a product demo with our sales team, paid for a plan and its implementation, and started using the product. This 360-degree view helps us see the value we bring to the company, evaluate our performance, and calculate the return on our investments in paid ads and in the team.
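Once spend and attributed results are joined per campaign, the return calculation is plain arithmetic. The sketch below is hypothetical: it does not use Rick’s API, and the field names and numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class CampaignResult:
    name: str
    ad_spend: float  # money spent on the campaign
    demos: int       # product demos attributed to the campaign
    deals: int       # closed deals attributed to the campaign
    revenue: float   # revenue from those deals


def cost_per_demo(c: CampaignResult) -> float:
    # A campaign with no demos has an effectively infinite cost per demo.
    return c.ad_spend / c.demos if c.demos else float("inf")


def demo_to_deal_rate(c: CampaignResult) -> float:
    return c.deals / c.demos if c.demos else 0.0


def roi(c: CampaignResult, team_cost: float = 0.0) -> float:
    """ROI as a fraction: (revenue - total cost) / total cost."""
    total_cost = c.ad_spend + team_cost
    return (c.revenue - total_cost) / total_cost


result = CampaignResult("Instagram stories: success stories", ad_spend=1000.0,
                        demos=8, deals=2, revenue=3000.0)
print(cost_per_demo(result))         # 125.0
print(demo_to_deal_rate(result))     # 0.25
print(roi(result))                   # 2.0, a 200% return counting ad spend only
print(roi(result, team_cost=500.0))  # 1.0 once team time is included
```

Including the team’s cost, not just ad spend, gives the fuller picture of what the growth team returns to the company.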

We also use Dashly for analytics, since we test many of our hypotheses with it. For example, we monitor our email and chatbot metrics there.

After a test, we collect as many metrics as possible, because we need to understand why a hypothesis worked out or didn’t. The figures help you decide whether to test a hypothesis again. For example, say we test an email campaign inviting people to our event; we want to generate 30 registrations, but we get only 5.

Looking at the other campaign metrics, I can see that the open rate was only 3%, so most recipients never even saw our offer. In that case, we should test the campaign again, but with a more appealing subject line.


What happens after you test a hypothesis?

If a hypothesis works out, we scale it in paid channels or pass it to another team, like production or editors. Our teammates fine-tune it, design it, and roll it out to the public.

If it doesn’t work out, and we know we did our best with it, we reject it.

We share the outcomes of all hypotheses with the marketing team and tell them what they should and shouldn’t do. Then, once a month, we talk about the most exciting hypotheses at company-wide stand-ups.

It’s amazing! But it all looks so smooth. You decided to team up, succeeded, and fine-tuned the processes. Didn’t you face any challenges on your way?

Of course, we did 🙂 This growth team is actually our second attempt.

About a year and a half ago, we tried to build a growth team for the first time.

And we failed.

At that time, the team had only two people, and they split their time between growth and other Dashly teams.

They tested hypotheses chaotically at different funnel stages, without focusing on any key metric. That made it hard for them to evaluate their performance: when you test everything everywhere, you simply can’t tell whether the tests work out.

As a result, you can’t see the growth.

That team lasted two months and tested ten hypotheses. Then the guys ran out of time for it, and the team broke up.

We drew two conclusions from that experience:

- Set up a separate team to work on growth systematically.

- Focus on one key metric.

With that in mind, let’s wrap up our conversation with some advice for growth team beginners. What would you suggest?

- Find at least one ally in the company to discuss hypotheses with. That’s enough to begin.

- Identify one metric you’ll be working on, and make sure your tracking lets you see how your tests influence it. It’s essential that the metric be tied to money: that shows you’re affecting the company’s financial results and helps you prove the value of growth work. When you show that your tests brought the company X dollars, it may be an aha moment for everyone, and they will start believing in growth. You’ll also get more resources: investment in the team, more flexible timelines, and a budget for tests.

- Learn from hypotheses that didn’t work out. Failure is okay, but use it to train your internal neural network to make better decisions.

And the central point: don’t be looking for a magic growth hack. Build a growth process instead!

Great! Thank you so much for sharing it with us in detail. It was so exciting. Now, let’s look forward to questions from our users.

This was only a part of what Polly shared, and more posts on growth processes are coming. Stay tuned to get our blog updates!
